RESEARCH ARTICLE

An Entropy Rate Theorem for a Hidden Inhomogeneous Markov Chain

Yao Qi-feng1, Dong Yun2, Wang Zhong-Zhi1, *

Article Information

Identifiers and Pagination:

Year: 2017

Volume: 8

First Page: 19

Last Page: 26

Publisher Id: TOSPJ-8-19

DOI: 10.2174/1876527001708010019

Article History:

Received Date: 02/02/2017

Revision Received Date: 03/07/2017

Acceptance Date: 16/08/2017

Electronic publication date: 30/09/2017

Collection year: 2017

open-access license: This is an open access article distributed under the terms of the Creative Commons Attribution 4.0 International Public License (CC-BY 4.0), a copy of which is available at: https://creativecommons.org/licenses/by/4.0/legalcode. This license permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.

Abstract

Objective:

The main objective of our study is to extend some entropy rate theorems to a hidden inhomogeneous Markov chain (HIMC) and to establish an entropy rate theorem under some mild conditions.

Introduction:

A hidden inhomogeneous Markov chain contains two different stochastic processes; one is an inhomogeneous Markov chain whose states are hidden and the other is a stochastic process whose states are observable.

Materials and Methods:

The proof of the theorem relies on some ergodic properties of an inhomogeneous Markov chain, together with properties of the norm and boundedness conditions on series.

Results:

This paper presents an entropy rate theorem for an HIMC under some mild conditions and two corollaries for a hidden Markov chain and an inhomogeneous Markov chain.

Conclusion:

Under some mild conditions, the entropy rates of an inhomogeneous Markov chain, a hidden Markov chain and an HIMC have similar forms and are easy to calculate.

1. INTRODUCTION

A hidden Markov model (HMM) is a statistical Markov model in which the system being modeled is assumed to be a Markov process with unobserved (hidden) states. Hidden Markov models are especially known for their applications in temporal pattern recognition, such as speech, handwriting and gesture recognition [1], part-of-speech tagging, musical score following [2], partial discharges [3], and bioinformatics. The hidden Markov chain (HMC) considered here is derived from the hidden Markov model (HMM) described above.

The entropy rate, or source information rate, of a stochastic process is, informally, the time density of the average information in the process, and it plays an important role in information theory. In recent years, many approaches have therefore been adopted to develop the theory of the entropy rate of a hidden Markov chain. For instance, Ordentlich et al. [4] used Blackwell's measure to compute the entropy rate, and Egner et al. [5] introduced variation bounds. Ordentlich et al. [4], Jacquet et al. [6], Zuk et al. [7] and others studied the variation of the entropy rate as a function of the parameters of the underlying Markov chain. Liu et al. [8] gave some limit properties of the relative entropy and the relative entropy density, as well as a Shannon-McMillan theorem for inhomogeneous Markov chains.

Motivated by the work above, the main objective of our study is to extend some of these results to an HIMC and to establish an entropy rate theorem under some mild conditions. For the definitions and methods, we draw on W.G. Yang et al. [9] and G.Q. Yang et al. [10]. W.G. Yang et al. [9] proved a convergence theorem for the Cesàro averages of inhomogeneous Markov chains, gave a limit theorem for a functional of inhomogeneous Markov chains, and discussed applications of this limit theorem to Markov decision processes and information theory. G.Q. Yang et al. [11] introduced the notion of countable hidden inhomogeneous Markov models, obtained some properties of these models, and established two strong laws of large numbers for them, together with corollaries.

The remainder of the paper is organized as follows. Section 2 gives a brief description of the HIMC and the related lemmas. Section 3 presents the main results and their proofs.

2. PRELIMINARIES

In this section we collect some fundamental definitions and preliminaries that are needed in the next section.

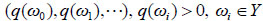

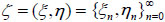

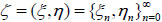

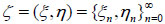

Let ζ = (ξ, η) be a random vector, where ξ = (ξ0, ξ1, ···) and η = (η0, η1, ···) are two different stochastic processes: η is hidden and takes values in the set Y = {ω0, ω1, ···}, and ξ is observable and takes values in the set X = {θ0, θ1, ···}.

We first recall the definition of a hidden inhomogeneous Markov chain (HIMC) $\zeta = (\xi, \eta) = \{\xi_n, \eta_n\}_{n=0}^{\infty}$ with hidden chain $\{\eta_n\}_{n=0}^{\infty}$ and observable process $\{\xi_n\}_{n=0}^{\infty}$.

Definition 1. [11] The process ζ = (ξ, η) is called an HIMC if it satisfies the following conditions:

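1. A given time-inhomogeneous Markov chain $\{\eta_n\}_{n=0}^{\infty}$ takes values in the state space Y, with starting distribution

$$(q(\omega_0), q(\omega_1), q(\omega_2), \cdots), \qquad q(\omega_i) = P(\eta_0 = \omega_i),\ \omega_i \in Y, \tag{2.1}$$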

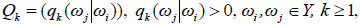

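and transition matrices

$$Q_n = (q_n(\omega_i, \omega_j)), \qquad \omega_i, \omega_j \in Y,\ n \ge 1, \tag{2.2}$$

where

$$q_n(\omega_i, \omega_j) = P(\eta_n = \omega_j \mid \eta_{n-1} = \omega_i).$$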

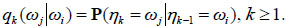

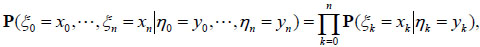

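2. For any n,

$$P(\xi_0 = \theta_{l_0}, \cdots, \xi_n = \theta_{l_n} \mid \eta_0 = \omega_{i_0}, \cdots, \eta_n = \omega_{i_n}) = \prod_{k=0}^{n} P(\xi_k = \theta_{l_k} \mid \eta_k = \omega_{i_k}). \tag{2.3}$$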

Some necessary and sufficient conditions for (2.3) were proved by G.Q. Yang et al. [11].

a. A necessary and sufficient condition for (2.3) to hold is that, for any n,

|

(2.4) |

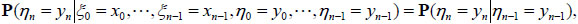

b. ζ = (ξ, η) is an inhomogeneous hidden Markov model if and only if, for all n > 0,

|

(2.5) |

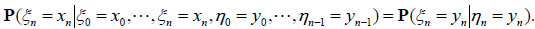

c. ζ = (ξ, η) is an inhomogeneous hidden Markov model if and only if, for all n > 0,

|

(2.6) |

|

(2.7) |
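The two ingredients of Definition 1, a hidden chain driven by time-dependent transition matrices and observations that depend only on the current hidden state, can be sketched as a small simulation. This is only an illustration under stated assumptions: the state spaces are truncated to two states, and the callables `Q_of` and `P_of` (returning the transition matrix and the observation matrix at time n) as well as all numerical values are hypothetical and not taken from the paper.

```python
import random

def sample_index(probs, rng):
    """Draw an index from a finite probability vector by inverse-CDF sampling."""
    u = rng.random()
    acc = 0.0
    for i, p in enumerate(probs):
        acc += p
        if u < acc:
            return i
    return len(probs) - 1  # guard against floating-point round-off

def simulate_himc(q0, Q_of, P_of, n_steps, seed=0):
    """Simulate hidden states eta_0..eta_n and observations xi_0..xi_n.

    q0   : starting distribution of the hidden chain
    Q_of : hypothetical callable n -> transition matrix Q_n (n >= 1)
    P_of : hypothetical callable n -> observation matrix at time n,
           with rows indexed by the hidden state
    """
    rng = random.Random(seed)
    eta = [sample_index(q0, rng)]
    xi = [sample_index(P_of(0)[eta[0]], rng)]
    for n in range(1, n_steps + 1):
        # The hidden chain moves according to the time-n transition matrix.
        eta.append(sample_index(Q_of(n)[eta[-1]], rng))
        # The observation depends only on the current hidden state.
        xi.append(sample_index(P_of(n)[eta[-1]], rng))
    return eta, xi

# Illustrative two-state chain whose transition matrices drift toward Q.
Q = [[0.9, 0.1], [0.5, 0.5]]

def Q_of(n):
    w = 1.0 / (n + 1)
    return [[(1 - w) * Q[i][j] + w * 0.5 for j in range(2)] for i in range(2)]

def P_of(n):
    return [[0.8, 0.2], [0.3, 0.7]]

eta, xi = simulate_himc([0.5, 0.5], Q_of, P_of, n_steps=10)
```

Only `eta` would be unobserved in practice; a statistician sees `xi` alone.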

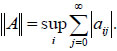

Definition 2. Let h = (h0, h1, ···) be a vector. Define the norm of h by

$$\|h\| = \sum_{j} |h_j|.$$

Let A = (aij) be a square matrix. Define the norm of A by

$$\|A\| = \sup_{i} \sum_{j} |a_{ij}|.$$

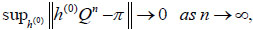
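For finite truncations, the norms of Definition 2 are straightforward to compute. The sketch below assumes the usual conventions for this setting, namely the sum of absolute values for a vector and the largest absolute row sum for a matrix; the function names are illustrative only.

```python
def vector_norm(h):
    """Sum of absolute values of the coordinates (assumed convention)."""
    return sum(abs(x) for x in h)

def matrix_norm(A):
    """Largest absolute row sum (assumed convention; sup becomes max here)."""
    return max(sum(abs(a) for a in row) for row in A)

# Distance between two transition matrices, as used in Cesaro-type conditions.
Q_k = [[0.8, 0.2], [0.5, 0.5]]
Q = [[0.9, 0.1], [0.5, 0.5]]
difference = [[Q_k[i][j] - Q[i][j] for j in range(2)] for i in range(2)]
distance = matrix_norm(difference)
```

With these conventions every probability vector has norm 1 and every stochastic matrix has norm 1.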

Definition 3. [11] Let Q be a transition matrix of a homogeneous Markov chain. We call Q strongly ergodic if there exists a probability distribution π = (π0, π1, π2, ···) on Y which satisfies

|

(2.8) |

where h(0) is a starting vector. Obviously, (2.8) implies πQ = π, and we call π the stationary distribution determined by Q.

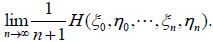

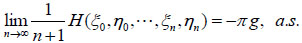
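For a finite, aperiodic example, the stationary distribution of Definition 3 can be approximated by repeatedly applying Q to a starting vector. The following is a minimal sketch under that assumption; the matrix, the tolerance and the function names are illustrative and not from the paper.

```python
def left_multiply(h, Q):
    """Row vector times matrix: (hQ)_j = sum_i h_i * Q[i][j]."""
    size = len(Q)
    return [sum(h[i] * Q[i][j] for i in range(size)) for j in range(size)]

def stationary_by_iteration(Q, h0, tol=1e-12, max_iter=100000):
    """Iterate h <- hQ until successive iterates differ by less than tol."""
    h = list(h0)
    for _ in range(max_iter):
        nxt = left_multiply(h, Q)
        if sum(abs(a - b) for a, b in zip(nxt, h)) < tol:
            return nxt
        h = nxt
    raise RuntimeError("no convergence; Q may be periodic or reducible")

Q = [[0.9, 0.1], [0.5, 0.5]]
pi = stationary_by_iteration(Q, [1.0, 0.0])
residual = sum(abs(a - b) for a, b in zip(left_multiply(pi, Q), pi))
```

For this Q the limit is π = (5/6, 1/6), which can be checked by hand from πQ = π. Plain iteration does not converge for periodic matrices, which is one reason Cesàro averages appear later in the paper.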

Definition 4.

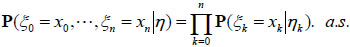

[9] Let  be a hidden inhomogeneous Markov chain defined as above and H (ξ0 , η0 , ···, ξn, ηn) be the entropy of ζ. The entropy rate of ζ is defined by

be a hidden inhomogeneous Markov chain defined as above and H (ξ0 , η0 , ···, ξn, ηn) be the entropy of ζ. The entropy rate of ζ is defined by

|

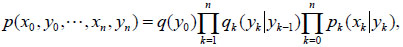

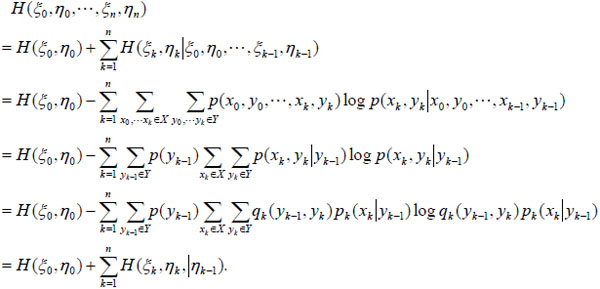

For simplicity, we use the natural logarithm, so the entropy is measured in nats. From the definitions of entropy and of an HIMC, we have

|
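As a hedged sketch, and not necessarily the authors' exact display, the chain rule for entropy, the Markov property of η and the conditional independence in (2.3) give the following decomposition of the joint entropy, where H(· | ·) denotes conditional entropy:

```latex
\begin{aligned}
H(\xi_0, \eta_0, \cdots, \xi_n, \eta_n)
  &= H(\eta_0, \cdots, \eta_n) + H(\xi_0, \cdots, \xi_n \mid \eta_0, \cdots, \eta_n) \\
  &= H(\eta_0) + \sum_{k=1}^{n} H(\eta_k \mid \eta_{k-1})
     + \sum_{k=0}^{n} H(\xi_k \mid \eta_k) \\
  &= H(\eta_0) + H(\xi_0 \mid \eta_0)
     + \sum_{k=1}^{n} \bigl[ H(\eta_k \mid \eta_{k-1}) + H(\xi_k \mid \eta_k) \bigr].
\end{aligned}
```

Each bracketed term depends only on the distribution of the hidden state at time k−1, the transition matrix at time k and the observation probabilities at time k, which is what makes a per-step entropy vector a natural object in the main theorem.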

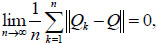

Lemma 1. [10] Let η = (η0, η1, ···) be an inhomogeneous Markov chain with transition matrices {Qn, n ≥ 1}. Let Q be a periodic strongly ergodic stochastic matrix. Let c = (c1, c2, ···) be the left eigenvector of Q that is the unique solution of the equations cQ = c and ∑j cj = 1. Let B be a constant stochastic matrix, each row of which is c. If

|

(2.9) |

then

|

(2.10) |

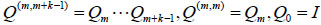

holds for any m ∈ N, where $Q^{(m, m+k)} = Q_{m+1} \cdots Q_{m+k}$ and $Q^{(m, m)} = I$ (I is the identity matrix).

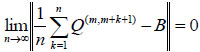
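Why Lemma 1 is stated for averages rather than for the matrix products themselves can be seen in the simplest periodic case. The sketch below takes the homogeneous special case Qn = Q with a two-state matrix of period 2 (an illustrative choice, not from the paper): the powers of Q oscillate, yet their Cesàro average approaches the constant matrix B whose rows equal c = (1/2, 1/2).

```python
def mat_mul(A, B):
    """Product of two square matrices given as lists of rows."""
    size = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(size)) for j in range(size)]
            for i in range(size)]

def cesaro_average_of_powers(Q, n):
    """Return (1/n) * (Q + Q^2 + ... + Q^n)."""
    size = len(Q)
    power = [[1.0 if i == j else 0.0 for j in range(size)] for i in range(size)]
    total = [[0.0] * size for _ in range(size)]
    for _ in range(n):
        power = mat_mul(power, Q)
        for i in range(size):
            for j in range(size):
                total[i][j] += power[i][j]
    return [[total[i][j] / n for j in range(size)] for i in range(size)]

Q = [[0.0, 1.0], [1.0, 0.0]]  # period 2: Q^k alternates between Q and I
average = cesaro_average_of_powers(Q, 1000)
```

Here c = (1/2, 1/2) is the unique solution of cQ = c with coordinates summing to 1, so B has all entries equal to 1/2.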

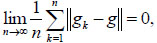

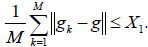

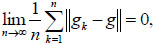

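Lemma 2. Let {gn, n ≥ 1} and g be column vectors with real entries. If

$$\lim_{n \to \infty} \frac{1}{n} \sum_{k=1}^{n} \|g_k - g\| = 0, \tag{2.11}$$

then there exists a subsequence {gnk, k ≥ 1} of {gn, n ≥ 1} such that

$$\lim_{k \to \infty} \|g_{n_k} - g\| = 0. \tag{2.12}$$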

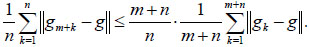

Proof. Assume that (2.11) holds. We have

|

(2.13) |

Hence

|

(2.14) |

holds for any m ∈ N.

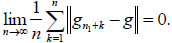

Choose Xk > 0 with Xk ↓ 0. By (2.14), there exists M ∈ N such that

|

(2.15) |

Therefore, there exists n1 ≤ M such that ||gn1−g|| ≤ X1. By Eq. (2.14), we have

|

(2.16) |

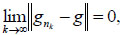

Therefore, there exists n2 > n1 such that ||gn2−g|| ≤ X2. Proceeding in this way, we obtain a subsequence {gnk, k ≥ 1} of {gn, n ≥ 1} such that ||gnk − g|| ≤ Xk for every k. Since Xk ↓ 0, (2.12) follows immediately.
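The selection argument in this proof can be tried on a scalar toy sequence that converges in the Cesàro sense but not in the ordinary sense. In the sketch below, the sequence (1 at perfect squares, 0 elsewhere), the candidate limit g = 0 and the tolerance list `eps_list` (playing the role of the decreasing thresholds in the proof) are all illustrative assumptions.

```python
import math

def g_seq(n):
    """Toy sequence: 1 at perfect squares, 0 elsewhere (candidate limit 0)."""
    r = math.isqrt(n)
    return 1.0 if r * r == n else 0.0

def cesaro_distance(n, g=0.0):
    """(1/n) * sum_{k=1}^{n} |g_k - g|."""
    return sum(abs(g_seq(k) - g) for k in range(1, n + 1)) / n

def pick_subsequence(eps_list, g=0.0, search_limit=10**6):
    """Greedily pick increasing indices n_k with |g_{n_k} - g| <= eps_k."""
    indices = []
    start = 1
    for eps in eps_list:
        n = start
        while abs(g_seq(n) - g) > eps:
            n += 1
            if n > search_limit:
                raise RuntimeError("no admissible index found")
        indices.append(n)
        start = n + 1  # keep the indices strictly increasing
    return indices

eps_list = [1.0 / k for k in range(2, 7)]  # 1/2, 1/3, ..., 1/6
indices = pick_subsequence(eps_list)
average_gap = cesaro_distance(10000)
```

The sequence itself does not converge, since it equals 1 infinitely often, but the averaged distance shrinks and the selected indices skip the perfect squares.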

3. MAIN RESULTS

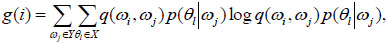

Theorem 1. Let $\zeta = \{\xi_n, \eta_n\}_{n=0}^{\infty}$ be an HIMC defined as above, let Q = (q(ωi, ωj)) be another transition matrix, and assume that Q is periodic strongly ergodic. Let

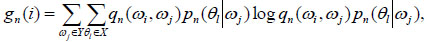

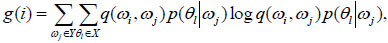

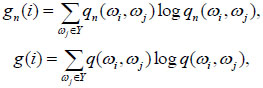

|

(3.1) |

|

(3.2) |

where gn(i) and g(i) are the i-th coordinates of the column vectors gn and g, respectively, and {||gn||, n ≥ 1} is bounded. If

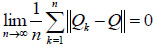

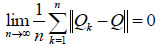

|

(3.3) |

and

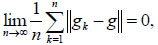

|

(3.4) |

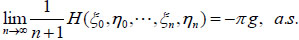

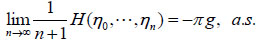

then the entropy rate of ζ = (ξ, η) exists and

|

(3.5) |

where π = (π0, π1, π2, ···) is the unique stationary distribution determined by Q.

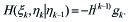

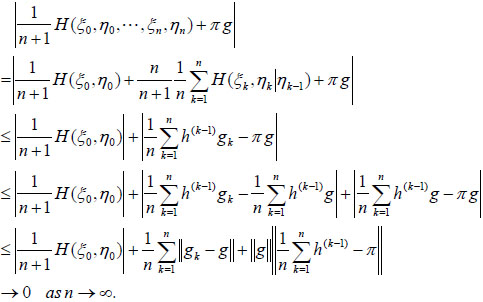
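A finite-state sketch of how such an entropy rate might be evaluated is given below. It rests on an assumption that should be checked against (3.1), (3.2) and (3.5): that g(i) is the entropy of row i of Q plus the Q-weighted average of the entropies of the observation distributions, and that the entropy rate is the π-weighted average of g. The matrix `P` of observation probabilities and all function names are hypothetical.

```python
import math

def entropy(probs):
    """Shannon entropy in nats, with the convention 0 * log 0 = 0."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)

def g_vector(Q, P):
    """Assumed form: g(i) = H(Q[i]) + sum_j Q[i][j] * H(P[j])."""
    observation_entropy = [entropy(row) for row in P]
    size = len(Q)
    return [entropy(Q[i])
            + sum(Q[i][j] * observation_entropy[j] for j in range(size))
            for i in range(size)]

def entropy_rate(pi, Q, P):
    """Assumed form of the limit: sum_i pi_i * g(i)."""
    g = g_vector(Q, P)
    return sum(pi[i] * g[i] for i in range(len(pi)))

# Sanity case: fair-coin hidden chain, observations reveal the hidden state.
Q = [[0.5, 0.5], [0.5, 0.5]]
P = [[1.0, 0.0], [0.0, 1.0]]
pi = [0.5, 0.5]
rate = entropy_rate(pi, Q, P)
```

In this sanity case the observations add no randomness beyond the hidden chain, so the rate reduces to log 2 nats per step, the entropy of a fair coin.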

Proof. Let h(k−1) be the row vector whose i-th coordinate is P(ηk−1 = ωi). Then, using the properties of the HIMC and the definition of gk, we have

|

(3.6) |

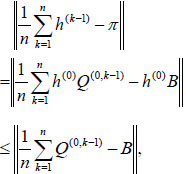

Take B to be the constant stochastic matrix whose rows are all equal to π. Note that π = h(0)B, where h(0) is the starting distribution of the Markov chain. Since

|

(3.7) |

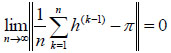

where Q(0, k−1) = Q1···Qk−1 and Q(0, 0) = I (I is the identity matrix), by Eqs. (3.3) and (3.7) and Lemma 1, we have

|

(3.8) |

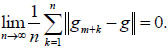

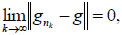

By Eq. (3.4) and Lemma 2, there exists a subsequence {gnk, k ≥ 1} of {gn, n ≥ 1} such that

|

(3.9) |

Hence ||g|| is finite. By Eq. (3.8) and the properties of entropy, we have

|

This completes the proof of Theorem 1.
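The convergence asserted by the theorem can be checked numerically on a small example. The sketch below is built on several assumptions that are not from the paper: a two-state chain, transition matrices Qk = (1 − w)Q + wU with w = 1/(k + 1) and U the uniform matrix (so that the average of ||Qk − Q|| tends to 0), time-independent observation probabilities `P`, an aperiodic Q for simplicity, and the per-step entropy vector g(i) = H(Q[i]) + Σj Q[i][j] H(P[j]).

```python
import math

def entropy(probs):
    """Shannon entropy in nats, with the convention 0 * log 0 = 0."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)

def g_vector(Q, P):
    """Assumed form: g(i) = H(Q[i]) + sum_j Q[i][j] * H(P[j])."""
    observation_entropy = [entropy(row) for row in P]
    size = len(Q)
    return [entropy(Q[i])
            + sum(Q[i][j] * observation_entropy[j] for j in range(size))
            for i in range(size)]

def drifting_matrix(Q, k):
    """Hypothetical Q_k = (1 - w) Q + w U with w = 1/(k+1), U uniform."""
    size = len(Q)
    w = 1.0 / (k + 1)
    return [[(1.0 - w) * Q[i][j] + w / size for j in range(size)]
            for i in range(size)]

def averaged_joint_entropy(q0, Q, P, n):
    """(1/n) * H(xi_0, eta_0, ..., xi_n, eta_n) computed via the chain rule."""
    size = len(Q)
    h = list(q0)  # distribution of eta_{k-1}
    total = entropy(h) + sum(h[i] * entropy(P[i]) for i in range(size))
    for k in range(1, n + 1):
        Qk = drifting_matrix(Q, k)
        g_k = g_vector(Qk, P)
        total += sum(h[i] * g_k[i] for i in range(size))
        h = [sum(h[i] * Qk[i][j] for i in range(size)) for j in range(size)]
    return total / n

Q = [[0.9, 0.1], [0.5, 0.5]]
P = [[0.8, 0.2], [0.3, 0.7]]
pi = [5.0 / 6.0, 1.0 / 6.0]  # solves pi Q = pi for this Q

limit_value = sum(p * g for p, g in zip(pi, g_vector(Q, P)))
finite_n_value = averaged_joint_entropy([0.5, 0.5], Q, P, n=5000)
gap = abs(finite_n_value - limit_value)
```

The gap shrinks slowly here, roughly like (log n)/n, because the perturbation of Qk decays only like 1/k.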

Remark. Theorem 1 gives a method to compute the entropy rate of an HIMC under some mild conditions.

Corollary 1. Let $\zeta = \{\xi_n, \eta_n\}_{n=0}^{\infty}$ be a hidden homogeneous Markov chain with periodic strongly ergodic transition matrix Q = (q(ωi, ωj)) and transition probability p(θl|ωj), where ωi, ωj ∈ Y and θl ∈ X. Let

|

(3.10) |

where g(i) is the i-th coordinate of the column vector g and ||g|| is bounded. Then the entropy rate of ζ = (ξ, η) exists and

|

(3.11) |

where π = (π0, π1, π2, ···) is the unique stationary distribution determined by Q.

As a corollary, we obtain the following theorem of W.G. Yang et al. [9] for an inhomogeneous Markov chain.

Corollary 2. [9] Let $\eta = \{\eta_n\}_{n=0}^{\infty}$ be an inhomogeneous Markov chain, let Q = (q(ωi, ωj)) be another transition matrix, and assume that Q is periodic strongly ergodic. Let

|

where gn(i) and g(i) are the i-th coordinates of the column vectors gn and g, respectively, and {||gn||, n ≥ 1} is bounded. If

|

and

|

then the entropy rate of η exists and

|

where π = (π0, π1, π2, ···) is the unique stationary distribution determined by Q.
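For orientation, a hedged sketch of the shape this limit takes when there is no observation layer: assuming g(i) is the entropy of row i of Q, the limit is the familiar entropy rate of a Markov chain with transition matrix Q and stationary distribution π.

```latex
g(i) = -\sum_{j} q(\omega_i, \omega_j) \log q(\omega_i, \omega_j),
\qquad
\sum_{i} \pi_i \, g(i)
  = -\sum_{i} \sum_{j} \pi_i \, q(\omega_i, \omega_j) \log q(\omega_i, \omega_j).
```

The hidden-chain versions differ only by an additional term in g(i) that accounts for the randomness of the observations given the hidden state.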

CONCLUSION

We recalled the definitions of an HIMC, the norm, the stationary distribution and the entropy rate. Lemma 1 gives a limit property of the transition matrices of an inhomogeneous Markov chain, and Lemma 2 gives a limit property of sequences of vectors. The proof follows the approach used for inhomogeneous Markov chains; its key step is that the Cesàro average of the state distributions of the Markov chain converges to the stationary distribution. Under some mild conditions, the entropy rates of an inhomogeneous Markov chain, a hidden Markov chain and an HIMC have similar forms and are easy to calculate.

CONSENT FOR PUBLICATION

Not applicable.

CONFLICT OF INTEREST

The authors declare no conflict of interest, financial or otherwise.

ACKNOWLEDGEMENTS

The authors are thankful to the referees for their valuable suggestions and the editor for encouragement.